USER STORIES

Over the past year, conversations within the TAI Authenticity working group have revealed that the exponential growth of generative AI is affecting archives, archivists, and their moving image collections. Inspired by the Library of Congress’ user stories for C2PA implementation in Government and Libraries, Archives, and Museums, the TAI Authenticity working group asked AMIA members to submit user stories to help us better understand specific concerns regarding GenAI as they relate to different roles and types of archives within the community. The stories below come from Authenticity Working Group members and from participants at our 2025 AMIA conference. We continue to seek and welcome new submissions. These user stories offer insightful perspectives that help us create tools and resources that will further our mission of ensuring authenticity, transparency, and trust.

Yvonne – AV archivist at human rights organization

As a human rights archivist, I want to feel assured that the documentation I am collecting is genuine (i.e., it is what it says it is). In my context, I may be uncertain about authenticity because videos are often captured by anonymous or unknown frontline witnesses and uploaded to social media and chat apps, where they are often re-shared by others. Manipulated and misleading media proliferate on these platforms, some of it produced by authoritarian actors with the resources to run misinformation campaigns that exploit mistrust and confusion, which undermines my ability to discern what is true.

Besides wanting to describe content accurately in my archive, I need to show some evidence of authenticity, or of how I determined authenticity, when I provide these materials to users. My users may include legal professionals, fact-finders, human rights advocates, journalists, and communities impacted by human rights violations. These users may accept media as authentic if there is testimony or corroboration from a trustworthy source, if there is an assessment of authenticity from a trustworthy expert, if there are distinctive markers of authenticity in the object itself, or if the process or system that created the documentation is known to ensure authenticity.

Phil – Cataloger and Researcher at a stock footage archive

As a cataloger and researcher for an archival film company, I see authenticating moving image collections as an important part of maintaining trust with patrons who seek to view and/or license our collections. It is my responsibility to review footage not yet publicly available for viewing and provide the proper descriptive metadata elements. Crucially, this includes providing exact or estimated dates, places, and people of note. Trust is important for a small archival film company. If I know the work I’m doing within the company is based on authentic film or tape-based material, then that will provide confidence for any patron who may have questions about, or seeks to license, our material. At a technical level, C2PA may not be of much use to the collections we already possess, but adhering to baseline standards of authentication would certainly be helpful.

Greg – Librarian at a university

As a curator at a university film archive, my mission is to support the work of students and faculty by enabling trusted access to the university’s film collections and providing subject matter expertise to guide their inquiry. Few students have experience with moving images beyond smartphones, social media, and YouTube. The GenAI revolution in synthetic imagery threatens to increase the gap between their lived experiences and the material past, a gap that undermines critical thinking and diminishes an appreciation of our democratic ideals.

Introducing students to the concept of media provenance, and showcasing the unique opportunities they have at our university to interact with and learn from an extensive, global film record of the 20th century, has become an imperative for me. Our film archive can support this work, but we need new models: relying on streaming our collections from the web as the primary (perhaps only) mode of access will no longer suffice. The ubiquity of GenAI content (and soon the facility with which convincing historic imagery can be conjured) urges us to create learning spaces in which quality access to archival media for citation, illustration, and reuse in research projects (even reuse in conjunction with GenAI) regrounds students and faculty in a relationship between the digital objects they manipulate and the material films from which they are derived.

Jennifer – Restoration and Preservation Manager

Working with film and archival collections, I have many concerns about generative AI and making sure our content is authentic. I am interested in non-generative AI tools that can help us restore, preserve, and provide access to more moving image materials.

Amber – AV Preservation Librarian

I have several concerns about generative AI within the scope of my work as a preservation librarian. GenAI can bring into question how we authenticate collection materials as genuine. Language within donor agreements and licensing agreements does not yet factor in concerns around generative AI. How GenAI tools may be used for generating metadata and captions/transcripts for accessibility is a concern. I have ethical questions about uses of materials in the AI context. I am concerned about the technological superiority of a small number of tech companies in connection with authentication tokens like C2PA.

Margaret – Film Archivist

As a film archivist working within Special Collections at a large university library, I am concerned with the possibility of inauthentic reuse of our footage without our knowledge or permission.

Danica – Archivist

As an archivist working with the stories of everyday people, I am concerned about the notion of “findability” being seen uncritically as a positive. For whom are materials more “findable”? I am concerned about what discovery tools will exist in 10 to 20 years, and where that leaves the right to be forgotten. I am also concerned about what GenAI is doing to the environment.

Ilana – Archival Producer

I conduct research for documentary filmmakers, and I’m concerned about accidentally passing on inauthentic content to filmmakers I work for, especially content from stock footage houses.

Nicole – Processing Archivist

As a processing archivist working with personal materials, I am concerned about the privacy and ecological impacts of generative AI. In terms of privacy, donors could be concerned as their personal reputations would be at stake. Furthermore, as AI becomes more ubiquitous, I worry that I may be using AI without knowing it.

This resource is part of a toolkit created by the Trust in Archives Initiative. © 2026. Licensed under the Creative Commons Attribution–NonCommercial–ShareAlike 4.0 (CC BY-NC-SA 4.0) license.

Version 1.0 – April 2026.

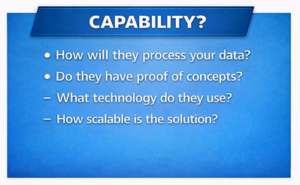

Capability

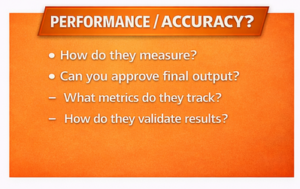

Capability Performance/Accuracy

Performance/Accuracy Privacy/Risk/Security – and More About Credibility

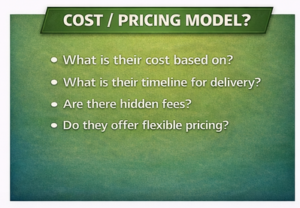

Privacy/Risk/Security – and More About Credibility Potential Cost/Pricing Model

Potential Cost/Pricing Model